To Analyze or Not to Analyze, That is the Question

Can we successfully improve reliability without analyzing reliability data?

In my personal experience, I have worked over many years with a lot of people who have worked hard to improve reliability. In the majority of cases, their starting point is a high level of reactive maintenance. They aim to utilize the principles of RCM, RCFA, condition monitoring, and improved maintenance practices to break out of the reactive maintenance cycle, and help the organization reduce costs and improve performance. The question is: how useful is reliability data analysis to the average reliability engineer?

You have probably all heard the quotes attributed to W. Edwards Deming: “Without data you’re just another person with an opinion”, and “In God we trust; all others must bring data”. That is great, but what data do you need, where do you get it, and what do you do with the data once you have got it?

In many cases (the majority of cases), the message I receive is that there is so much to improve, so much low-hanging fruit, that we do not need data to tell us what to do.

Let’s explore that thought a little.

Asset Criticality Ranking (ACR)

Although it is not data in the true sense, it is a numerical ranking that every reliability engineer needs in order to make informed decisions. I have written about how to develop the asset criticality ranking in previous articles, including in the previous edition of MaintWorld. In short, generating a score based on the reliability of an asset, the consequences of its failure, and the likelihood of detecting the onset of failure enables us to assess where our risks and opportunities for improvement lie.

The asset criticality ranking helps us to prioritize tasks so that we can make the best use of our precious time. The prioritization is based on addressing the assets that pose the greatest risk. We can prioritize Reliability Centered Maintenance (RCM) or Failure Modes and Effects Analysis (FMEA) work, condition monitoring, work orders, and much more.
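As a rough illustration of how such a ranking might be computed, the sketch below scores each asset from 1 to 5 on the three factors and multiplies them together. The 1 to 5 scales, the multiplication, and the asset names and scores are all assumptions made for this example; they are not the scoring method from the earlier articles.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    name: str
    failure_likelihood: int  # 1 = very reliable ... 5 = fails often (assumed scale)
    consequence: int         # 1 = negligible ... 5 = severe (assumed scale)
    detectability: int       # 1 = onset easily detected ... 5 = no warning (assumed scale)

    @property
    def criticality(self) -> int:
        # Assumed scoring rule: the product of the three factors.
        return self.failure_likelihood * self.consequence * self.detectability


def rank_by_criticality(assets):
    """Return the assets sorted from highest to lowest criticality score."""
    return sorted(assets, key=lambda a: a.criticality, reverse=True)


# Hypothetical assets and scores, for illustration only.
assets = [
    Asset("Air dryer #1", failure_likelihood=2, consequence=3, detectability=2),
    Asset("Feed pump A", failure_likelihood=4, consequence=5, detectability=3),
    Asset("Conveyor 7", failure_likelihood=3, consequence=2, detectability=1),
]

ranking = rank_by_criticality(assets)
for asset in ranking:
    print(f"{asset.name:<14} {asset.criticality}")
```

Whatever scoring rule you adopt, the point is the same: the sorted list tells you where to spend your time first.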

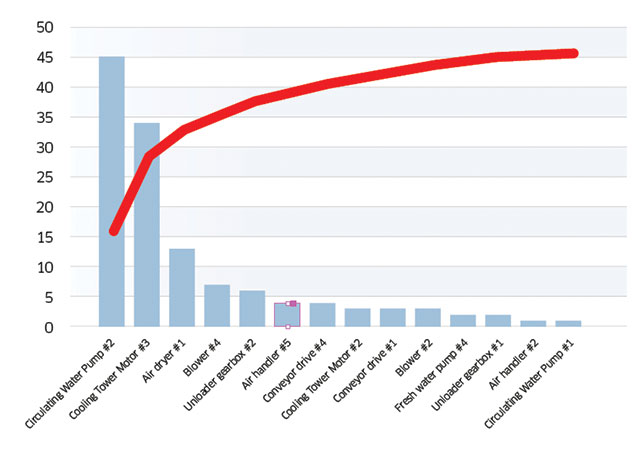

Pareto analysis: the “bad actor” list

Pareto analysis is also a tremendously valuable tool for identifying where you should focus your attention to have the greatest impact. The basic idea of Pareto analysis is that just 20% or fewer of your assets generate 80% or more of your costs (waste, downtime, etc.).

If you go through your CMMS records you should hopefully be able to extract which assets have received maintenance work over the last few years. With that information you can create a special type of histogram, called a Pareto chart, which lists assets in order of their impact: the assets that have failed most frequently are on the left, ranked across the graph to the assets that have failed the least on the right.

You can also display it as a list: the “bad actor” list.

Ideally you would also be able to create Pareto charts based on the nature of failure, the type of component, and if you were really, really fortunate, the root cause of the failure; but that would be hoping for too much…
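As a minimal sketch of the idea, the code below builds a “bad actor” list from a work-order export by counting corrective work orders per asset and adding a cumulative percentage. The work-order data and asset names are hypothetical, and the export is assumed to be one asset name per work order; a real CMMS extract would need cleaning first.

```python
from collections import Counter

# Hypothetical export: one entry per corrective work order, naming the asset.
work_orders = (
    ["Pump A"] * 12
    + ["Fan 3"] * 6
    + ["Air dryer #1"] * 1
    + ["Conveyor 7"] * 1
)


def bad_actor_list(work_orders):
    """Return (asset, count, cumulative %) rows, worst offender first."""
    counts = Counter(work_orders)
    total = sum(counts.values())
    rows, running = [], 0
    for asset, n in counts.most_common():
        running += n
        rows.append((asset, n, round(100 * running / total, 1)))
    return rows


rows = bad_actor_list(work_orders)
for asset, n, cumulative in rows:
    print(f"{asset:<14} {n:>3} {cumulative:>6}%")
```

The same function could be fed cost or downtime per work order instead of a simple count, if that is what you want to rank by.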

Combining Criticality, Pareto, and condition

If you also have an effective condition monitoring program, then you have the perfect trio: you will know where you face the greatest risk (ACR), where you have experienced the most problems (Pareto), and which asset(s) are likely to require repair or replacement in the near future (CM).

So if you look out of your office window at 10,000 assets and you are wondering where to start, start with the assets that are at the top of the criticality ranking and bad actor list and condition-monitoring warning list.
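One simple way to combine the three sources, sketched below, is to order assets by how many of the three lists they appear on. The function name, the asset names, and the choice of a simple count are assumptions made for illustration; you may prefer to weight the lists differently.

```python
def shortlist(critical, bad_actors, cm_warnings):
    """Order assets by how many of the three lists they appear on."""
    lists = [set(critical), set(bad_actors), set(cm_warnings)]
    all_assets = set().union(*lists)
    hits = {asset: sum(asset in s for s in lists) for asset in all_assets}
    # Most lists first; ties broken alphabetically for a stable order.
    return sorted(hits, key=lambda asset: (-hits[asset], asset))


# Hypothetical inputs: top of the ACR, the bad actor list, and CM warnings.
where_to_start = shortlist(
    critical=["Pump A", "Fan 3"],
    bad_actors=["Pump A", "Conveyor 7"],
    cm_warnings=["Pump A", "Fan 3"],
)
print(where_to_start)
```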

Key Performance Indicators (KPIs)

KPIs are another type of data that reliability engineers should use. KPIs can tell you how your program is performing (lagging KPIs), the performance you can expect in the future (leading KPIs), and where you have the greatest opportunity for improvement. This is a huge topic, but here are a few thoughts:

Rule #1 is that you have KPIs that are aligned with the goals of the organization. Overall Equipment Effectiveness (OEE) is an excellent KPI for a manufacturer.

Rule #2 is that you measure what you want to improve. People tend to focus on the metrics that are being recorded, especially if targets are established.

Rule #3 is that you use KPIs as described in the opening paragraph of this section, and not to financially reward a person; otherwise you may find that there are some shenanigans when it comes to how data is recorded…

Mean Time Between Failure (MTBF)

One of the most common measures of reliability, and the effectiveness of the improvements being made by the program, is the MTBF. But sadly, it is misused for a variety of reasons.

Just to ensure we are on the same page: if there were ten failures over a three-year period (36 months), then the MTBF would be 3.6 months (assuming the repair times are consistent in length). It is a simple metric, but you must be very careful how you use it unless there is a constant failure rate (i.e. the asset is not experiencing infant mortality or age-related failures, and you are not changing the reliability within the analysis window), you are focused on single failure modes, and you correctly treat voluntary removal from service (as distinct from actual failures).
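The arithmetic, and one reason for caution, can be sketched as follows. The failure times in the second half are invented for illustration: they also give ten failures in 36 months, so the MTBF is identical, yet the intervals between failures are clearly shrinking.

```python
def mtbf(operating_time, failures):
    """Mean time between failures: total time divided by number of failures."""
    if failures <= 0:
        raise ValueError("MTBF is undefined without at least one failure")
    return operating_time / failures


print(mtbf(36, 10))  # 3.6 (months)

# Hypothetical failure history (months since start): ten failures in 36 months.
failure_times = [9, 16, 22, 27, 30, 32, 33, 34, 35, 36]

# Time between consecutive failures; the first interval is measured from zero.
intervals = [b - a for a, b in zip([0] + failure_times, failure_times)]
print(intervals)
print(mtbf(36, len(failure_times)))  # still 3.6, despite the worsening trend
```

A single MTBF figure cannot distinguish this deteriorating asset from one that fails steadily every 3.6 months.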

There is more that could be said, and there is a great web site dedicated to this topic at www.nomtbf.com where you can learn more.

Using Weibull Analysis and Monte Carlo Simulations

OK, now we are getting serious. This is way beyond simple MTBF analysis.

This is a HUGE topic.

Let’s just say this: utilizing failure data, performing Weibull analysis, and performing Monte Carlo “simulations” allow a reliability engineer to understand the nature of failure (infant mortality, random, age-related) and the time-to-failure (the P-F interval) to create a more informed maintenance strategy. They also allow the engineer to build reliability block diagrams to estimate the future availability of the plant (or parts of it). And they enable the reliability engineer to answer all kinds of questions related to the optimal time to perform maintenance, estimating maintenance costs, assessing risk, and MUCH more.

You need to go beyond basic MTBF analysis to answer these questions, but it takes good data and an understanding of statistics to be successful.
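To give a flavour of what this involves, here is a minimal sketch using only the Python standard library. It fits a two-parameter Weibull distribution by median rank regression (with Bernard's approximation for the ranks) and then uses a simple Monte Carlo simulation to estimate the fraction of units that would fail before a planned replacement interval. It assumes complete failure data with no suspensions (censored units), and the failure ages and the 50-month interval are invented; dedicated reliability software handles far more than this.

```python
import math
import random


def fit_weibull(times):
    """Median rank regression on complete failure data; returns (beta, eta)."""
    t = sorted(times)
    n = len(t)
    xs = [math.log(ti) for ti in t]
    # Bernard's approximation to the median rank of the i-th ordered failure.
    ys = [math.log(-math.log(1 - (i - 0.3) / (n + 0.4))) for i in range(1, n + 1)]
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    beta = sxy / sxx                  # shape: slope of the fitted line
    eta = math.exp(mx - my / beta)    # scale: characteristic life
    return beta, eta


def failure_pattern(beta, tolerance=0.1):
    """Rough reading of the shape parameter (the tolerance is an assumption)."""
    if beta < 1 - tolerance:
        return "infant mortality"
    if beta > 1 + tolerance:
        return "age-related"
    return "random"


def simulate_failure_fraction(beta, eta, interval, trials=100_000, seed=1):
    """Monte Carlo estimate of the fraction of units failing before `interval`."""
    rng = random.Random(seed)
    failed = sum(rng.weibullvariate(eta, beta) < interval for _ in range(trials))
    return failed / trials


# Hypothetical failure ages in months for one failure mode.
ages = [31, 45, 52, 60, 68, 74, 83, 95, 110, 128]
beta, eta = fit_weibull(ages)
print(f"beta={beta:.2f}, eta={eta:.1f}, pattern={failure_pattern(beta)}")
print(f"fraction failing before 50 months: "
      f"{simulate_failure_fraction(beta, eta, 50):.3f}")
```

With only ten data points the fitted parameters carry a lot of uncertainty, which is exactly why good data and an understanding of statistics matter.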

Do You Have Good Data?

The bulk of the preceding commentary depends on having good data. Are you sure the information in the CMMS has been recorded accurately? When there is a failure recorded against “Air dryer #1”, are you sure it was not “Air dryer #2”? If you utilize failure data, are you sure about the component/part/failure mode/root cause recorded? If you find yourself saying “the data is not accurate, but it is the only data we’ve got”, then you must proceed with great care!

Collecting Good Data

The information we would like to have includes:

Which asset failed (the maintainable item)

The component type (motor, gearbox, etc.)

The part (coupling, drive-end bearing, etc.)

The failure mode (bearing seized, gear tooth broken, etc.)

The consequence of failure (production loss, labour cost, parts costs, etc.)

The root cause (lack of lubrication, incorrect operation, etc.)

When it failed

It is wishful thinking that you will be able to collect all of that information unless you go to considerable lengths, and in many cases you may not know the root cause. But for sure, the more accurate information you have the better position you will be in to make informed decisions.
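The list above maps naturally onto a simple record structure. The sketch below is one possible shape; the class and field names and the example values are assumptions for illustration, not a CMMS schema. The root cause is optional because, as noted, it is often unknown.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class FailureRecord:
    asset: str                        # the maintainable item
    component_type: str               # motor, gearbox, etc.
    part: str                         # coupling, drive-end bearing, etc.
    failure_mode: str                 # bearing seized, gear tooth broken, etc.
    consequence: str                  # production loss, labour cost, parts costs, etc.
    failed_at: datetime               # when it failed
    root_cause: Optional[str] = None  # often unknown at the time of repair


# Hypothetical record, for illustration only.
record = FailureRecord(
    asset="Air dryer #1",
    component_type="motor",
    part="drive-end bearing",
    failure_mode="bearing seized",
    consequence="production loss",
    failed_at=datetime(2020, 3, 14, 9, 30),
)
print(record)
```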

One of the ways to collect this data is via failure codes. But if a technician is confronted with 82 options, they will choose the first option or “other”. A smart system will only provide valid options. If the system knows the motor failed, it should only offer failure codes relevant to motors, and you should further limit them to the most common failure modes.
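A minimal sketch of that filtering logic might look like this. The code table, the function name, and the cap of five options are hypothetical; a real system would hold its codes in the CMMS and order them by how often each is actually used.

```python
# Hypothetical failure codes per component type, most common first.
FAILURE_CODES = {
    "motor": ["bearing seized", "winding fault", "overheating"],
    "gearbox": ["gear tooth broken", "bearing seized", "oil leak"],
}


def valid_failure_codes(component_type, max_options=5):
    """Offer only the codes relevant to this component, plus 'other'."""
    codes = FAILURE_CODES.get(component_type.lower(), [])
    return codes[:max_options] + ["other"]


print(valid_failure_codes("Motor"))
```

The technician sees a handful of relevant choices rather than 82, which makes an accurate selection far more likely.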

What If You Don’t Have Any Data?

There are three things you can do:

1. Use anecdotal information to construct the asset criticality ranking, and talk to the maintenance technicians and operators about the equipment that has failed the most and the most common repairs & installations. You may have records of the components and parts purchased most frequently. It should be possible to get a pretty good “bad actor” list.

2. Use “common knowledge” to set the priority of the improvement projects. It is well known that precision lubrication, alignment, balancing, fastening, and cleaning will improve the reliability of rotating machinery. You need standard operating procedures, work management, spares management, and written work procedures and standards. The 5S principles will improve efficiency and morale. Training will improve skills and morale. And the list goes on.

3. Start collecting good data. Today.

While the majority of reliability engineers may feel that they do not need data, or that they do not have good data to work with, I hope this article has explained why you can and should use what you’ve got, and why you should begin a plan to start collecting data that will help you make more informed decisions (and prove the benefits of your program).

Jason Tranter, CMRP,

Mobius Institute, jason@mobiusinstitute
