The criticality spectrum: a means to focus our attention where it is warranted

Developing an asset criticality ranking (ACR) is an important part of any reliability and performance improvement initiative. The criticality ranking enables an organization to prioritize and justify a wide range of activities and investments.

Unfortunately, it is all too common to perform a very basic criticality analysis that does not support decision-making (and has very little buy-in). In the author’s opinion, it is also too common to perform an analysis that is far too detailed, in the form of RCM or FMECA.

The aim of this article is to propose a graduated approach to assessing criticality which makes best use of your time while providing the decision-making information you need.

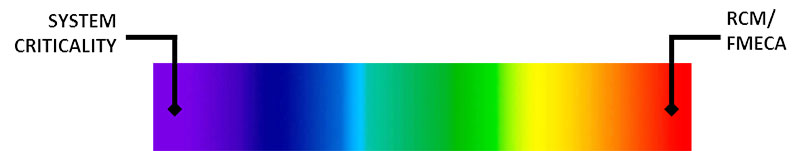

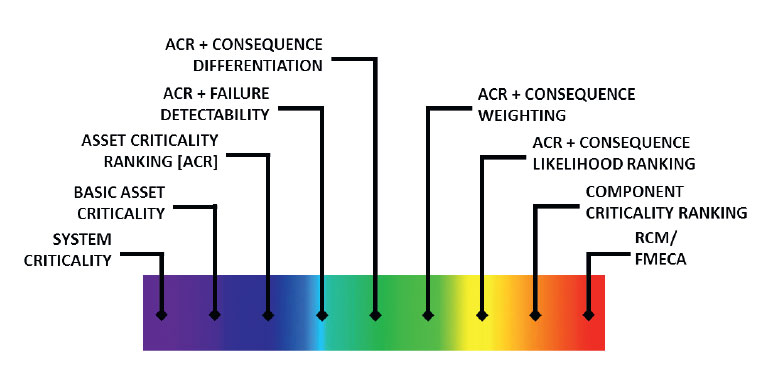

The criticality spectrum

The basic idea of the criticality spectrum is that we perform more and more detailed analysis based on criticality. At one end we have very little information to go on, i.e., a system criticality analysis. At the other end we have a great deal of information to go on, i.e., a detailed (but expensive) reliability centered maintenance (RCM) or failure modes, effects, and criticality analysis (FMECA).

We could start with the system criticality ranking and then perform more detailed analysis on the assets within a critical system. As you will soon read, we can perform a progressively more detailed asset criticality analysis, then a component criticality analysis on the critical assets, and finally the far more detailed RCM or FMECA on the most critical assets or components. As we move from left to right on the spectrum, we determine which assets warrant a more detailed analysis, thus saving time and providing focus where it is necessary.

Let’s take a closer look.

Basic system criticality ranking

The easy place to start with criticality analysis is to assign a criticality ranking to each system. If the failure of the system would result in serious consequences, then we would give it a high system criticality ranking. The normal approach is then to assign every piece of equipment within that system the same high ranking. While that is an acceptable place to start, it simply does not provide the granularity we need. To achieve the desired level of detail, we can next examine the assets within the critical systems.

Basic asset criticality ranking

Our minimum goal should be the asset criticality ranking: what do we really need?

One extreme, which is all too common in the author’s experience, is to label each asset “critical,” “essential,” or “nonessential.” While better than the system criticality ranking, such a simple ranking again does not provide the granularity we need to make the key decisions that must be made. But rather than analyzing every asset, we can now dig deeper on the “critical” assets.

Asset Criticality Ranking

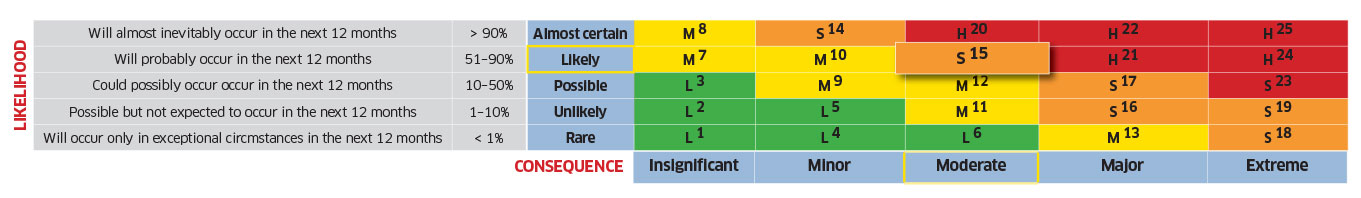

Instead of a crude asset criticality ranking, we can define a scoring system that differentiates between different assets. The score can be defined as a combination of the consequence of failure and the likelihood of failure.

ACR = Consequence x Likelihood

In order to provide structure and accountability to the process, we need a scoring system:

- The consequence could be scored from 1 to 5. If there is a chance of injury or death, the consequence would be scored ‘5.’

- The likelihood of failure will be scored from 0 to 1. It is the complement of reliability: the more reliable the asset, the lower the score. If there is a very high likelihood of failure occurring, it would be given a score of ‘1.’
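
As a minimal sketch of this scoring, assuming Python and entirely hypothetical asset names and scores, the product can be computed and used to rank assets. The function name `acr` and the range checks are illustrative choices, not part of any standard:

```python
def acr(consequence: int, likelihood: float) -> float:
    """Asset criticality ranking: consequence (1-5) x likelihood (0-1)."""
    if not 1 <= consequence <= 5:
        raise ValueError("consequence must be between 1 and 5")
    if not 0.0 <= likelihood <= 1.0:
        raise ValueError("likelihood must be between 0 and 1")
    return consequence * likelihood


# Hypothetical assets, each with (consequence, likelihood) scores.
assets = {
    "cooling pump": (5, 0.5),
    "conveyor drive": (3, 0.75),
    "exhaust fan": (2, 0.25),
}

# Rank from most to least critical.
ranked = sorted(assets, key=lambda name: acr(*assets[name]), reverse=True)
for name in ranked:
    print(f"{name}: ACR = {acr(*assets[name]):.2f}")
```

Note that the conveyor drive, with a moderate consequence but a high likelihood of failure, ranks almost as high as the cooling pump. A three-level “critical/essential/nonessential” label would hide that.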

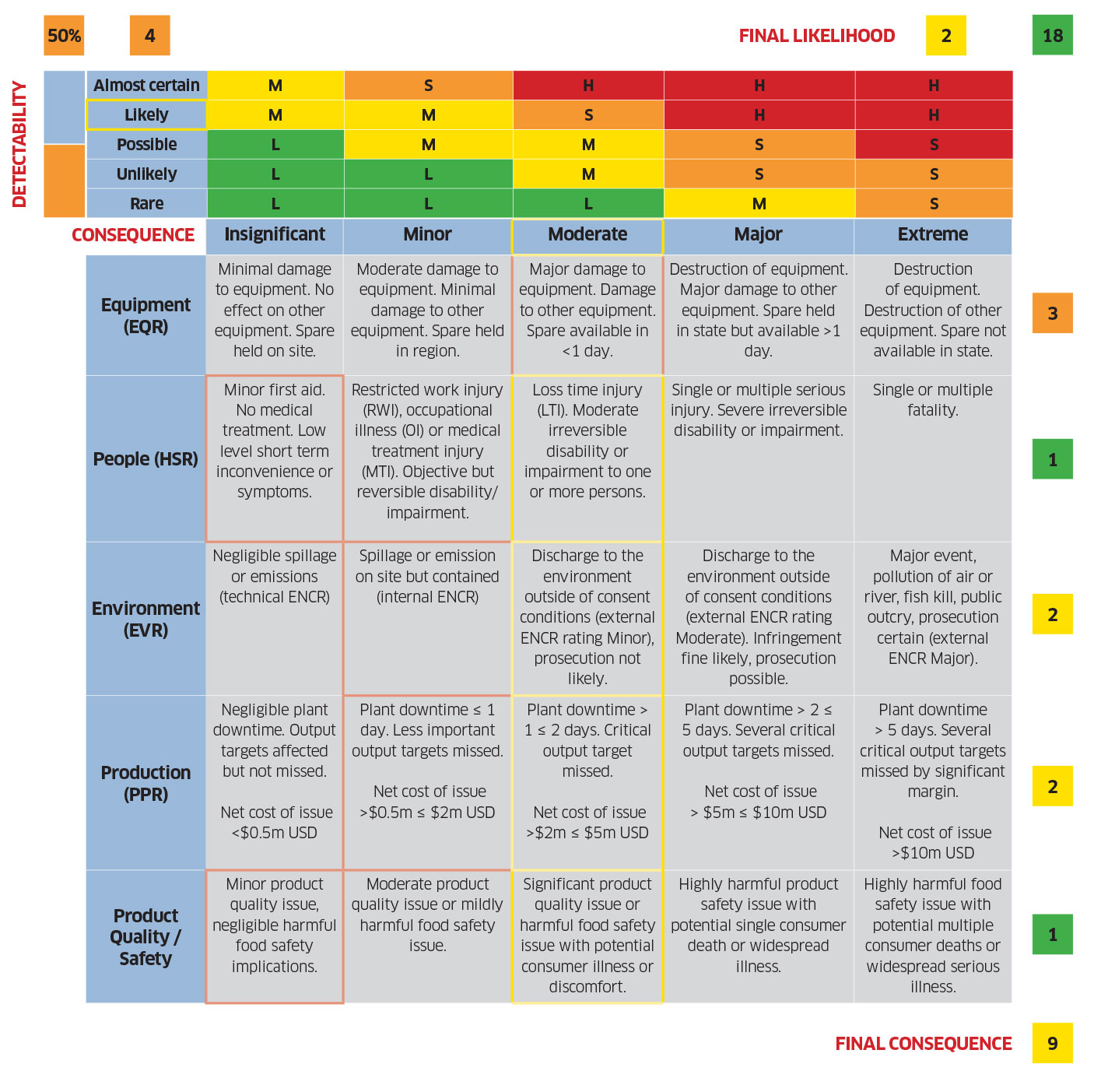

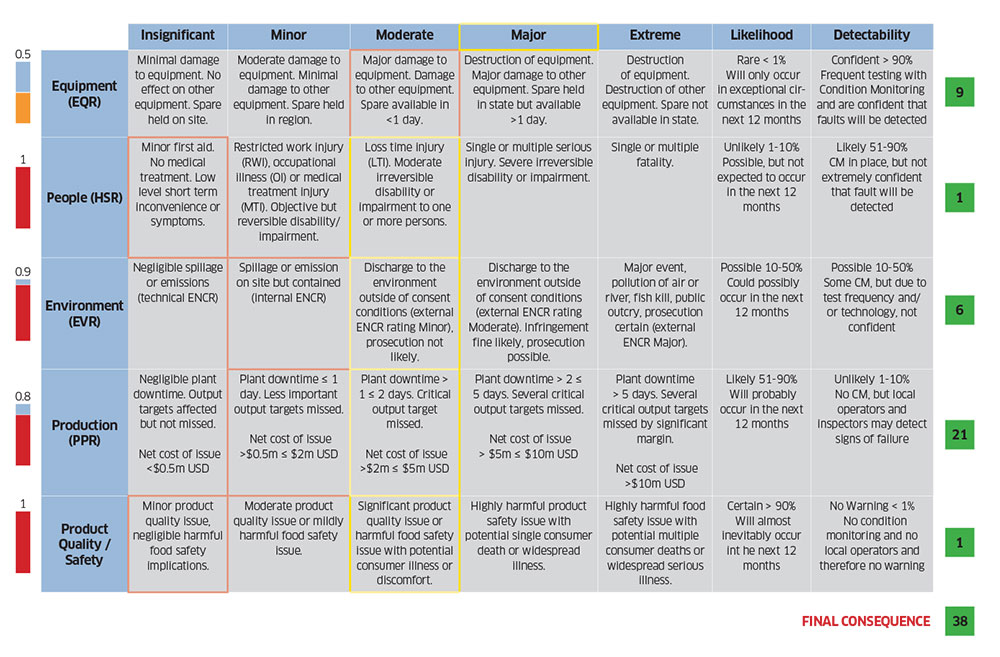

Table 1 illustrates how the likelihood of failure can be combined with the consequence of failure with a basic scoring system.

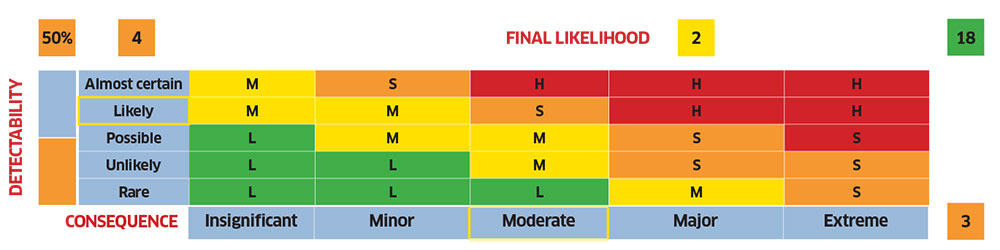

Asset criticality + failure detectability ranking

We should go one extra step. It is insufficient to just consider the likelihood of failure based purely on reliability. If we have a means to detect the onset of failure, then we are in a better position to avoid the consequences of failure. The score can be redefined as a combination of the consequence of failure and the likelihood of undetected failure as shown in Table 2.

ACR = Consequence x Likelihood x Detectability

The detectability can be scored from 0 to 1. A score of ‘1’ would indicate that there is no chance of detecting the onset of failure; it would be a complete surprise. A score close to ‘0’ would indicate that the onset of failure is almost certain to be detected. Note that this is not a ranking of whether such a failure mode is detectable in principle; it is a ranking of the likelihood that the onset of failure would actually be detected.
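
To illustrate the effect of the detectability term, here is a small sketch in Python. The scores are hypothetical; the point is only that the same asset drops in ranking once there is a good chance of catching the onset of failure:

```python
def acr_with_detectability(
    consequence: int, likelihood: float, detectability: float
) -> float:
    """ACR = consequence (1-5) x likelihood (0-1) x detectability (0-1).

    detectability = 1 means the onset of failure would go unnoticed;
    values near 0 mean it would almost certainly be detected.
    """
    return consequence * likelihood * detectability


# Hypothetical pump: same consequence and likelihood in both cases.
unmonitored = acr_with_detectability(5, 0.5, 1.0)   # failure is a surprise
monitored = acr_with_detectability(5, 0.5, 0.25)    # onset usually detected

print(f"unmonitored: {unmonitored}, monitored: {monitored}")
```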

Asset criticality + consequence differentiation

Scoring the consequences of failure is important. It is common to view a series of assets on a production line as having the same criticality because if any one of them failed, production would stop. But we need to distinguish between those assets; they really do not have the same criticality because they do not pose the same risk. Therefore, we can develop a more sophisticated way of ranking the consequences of failure based on a range of factors including the likely maintenance cost, the cost of lost production, the impact on quality, the impact on the environment, and very importantly, the impact on the safety of employees or customers. We should therefore assess the risks and develop a scoring system in each area of risk identified. Table 3 shows an example of such a system.
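
One way to picture this is a record of consequence scores per risk area. The sketch below is in Python, with hypothetical assets and scores. It assumes, purely for illustration, that the worst category sets the overall consequence score; your own scoring system may combine the categories differently:

```python
from dataclasses import dataclass, astuple


@dataclass(frozen=True)
class ConsequenceScores:
    """Consequence of failure, scored 1-5 in each risk area."""
    maintenance_cost: int
    lost_production: int
    quality: int
    environment: int
    safety: int

    def overall(self) -> int:
        # Assumption: the worst-scoring category drives the overall score.
        return max(astuple(self))


# Two hypothetical assets on the same line. Either failure stops production
# (lost_production = 4), but they do not pose the same risk.
filler = ConsequenceScores(
    maintenance_cost=2, lost_production=4, quality=3, environment=1, safety=1
)
feed_pump = ConsequenceScores(
    maintenance_cost=3, lost_production=4, quality=1, environment=3, safety=5
)

print(filler.overall(), feed_pump.overall())
```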

Asset criticality + consequence weighting

There is an extra step we need at this point. Thus far we have given equal weight to each consequence of failure. For example, we are assuming that the death of an employee has the same consequence as the loss of production for five days. We need to do better. Therefore, we need to weight each consequence against the others. If each consequence is scored from 1 to 5, we should apply a weighting to each consequence category so that only safety-related consequences can score a maximum of ‘5.’ Depending upon your circumstances, the consequence score related to production loss may only achieve the maximum score of ‘3.’ It is up to you to make that determination.
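
A simple way to implement such a weighting is to scale each raw 1-to-5 score by the maximum that category is allowed to reach. The Python sketch below assumes that approach. Apart from safety (5) and production loss (3), which follow the example above, the caps are hypothetical placeholders:

```python
RAW_MAX = 5

# Maximum weighted score each category may reach. Only safety can reach 5.
# Values other than "safety" and "lost_production" are hypothetical.
CATEGORY_CAP = {
    "safety": 5,
    "environment": 4,
    "lost_production": 3,
    "quality": 2,
    "maintenance_cost": 2,
}


def weighted_score(category: str, raw_score: int) -> float:
    """Scale a raw 1-5 score so its maximum equals the category cap."""
    if not 1 <= raw_score <= RAW_MAX:
        raise ValueError("raw_score must be between 1 and 5")
    return raw_score * CATEGORY_CAP[category] / RAW_MAX


# The worst possible production loss now scores 3, not 5.
print(weighted_score("lost_production", 5))
print(weighted_score("safety", 5))
```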

Asset criticality + consequence likelihood ranking

We now have a much better idea of the consequence of failure on the assets that we have been analyzing. Unfortunately, the system is still flawed. While we are considering the different risks, we are assigning the same likelihood of occurrence to each of those risks. For example, it may be rare for the asset to fail resulting in injury or death, but more common for the asset to fail resulting in downtime. Therefore, we can assign the likelihood and detectability to each consequence of failure, as shown in Table 4. This requires more detailed analysis, but initially we would only perform that analysis on the more critical assets.
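
The sketch below shows one possible reading of this step in Python. Each consequence carries its own likelihood and detectability, and the per-consequence products are summed into a single score. The summation is an assumption made for illustration (taking the largest product would be another reasonable choice), and all figures are hypothetical:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    category: str
    consequence: float    # weighted consequence score, up to 5
    likelihood: float     # 0-1, likelihood of this consequence occurring
    detectability: float  # 0-1, where 1 means no warning at all

    def score(self) -> float:
        return self.consequence * self.likelihood * self.detectability


def asset_criticality(risks: list[Risk]) -> float:
    # Assumption: the asset score is the sum of the per-consequence scores.
    return sum(r.score() for r in risks)


# Hypothetical asset: injury is rare but would come without warning;
# downtime is common but is often caught early.
risks = [
    Risk("safety", consequence=5, likelihood=0.125, detectability=1.0),
    Risk("lost_production", consequence=3, likelihood=0.5, detectability=0.5),
]

total = asset_criticality(risks)
dominant = max(risks, key=Risk.score)
print(f"total = {total}, dominant risk = {dominant.category}")
```

Breaking the score down this way also shows which consequence is driving the ranking, which helps when deciding what to do about it.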

Component criticality ranking

Although it has not been clearly stated, thus far we have considered the asset as a combination of components: for example, a motor coupled to a gearbox which drives a pump. Now it is time to take the assets with the highest criticality ranking and split them up into individual components. It is quite likely that we will find that the motor has a much lower criticality ranking than the gearbox (assuming that the gearbox is expensive and has a longer lead time), which in turn may have a lower criticality ranking than the pump (if the failure of the pump could cause an explosion and harm to the environment).
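
As a rough illustration in Python, the same scoring can be applied to each component of the motor, gearbox, and pump example. The scores below are hypothetical and chosen only to mirror the ordering described above:

```python
# Hypothetical component scores: (consequence, likelihood, detectability).
components = {
    "motor": (2, 0.25, 0.5),
    "gearbox": (4, 0.25, 0.5),   # expensive, long lead time
    "pump": (5, 0.5, 1.0),       # failure could cause an explosion
}


def component_score(scores: tuple[int, float, float]) -> float:
    """Consequence x likelihood x detectability for one component."""
    consequence, likelihood, detectability = scores
    return consequence * likelihood * detectability


# Rank components from most to least critical.
ranking = sorted(
    components, key=lambda name: component_score(components[name]), reverse=True
)
for name in ranking:
    print(f"{name}: {component_score(components[name])}")
```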

With this information we can better determine which spares to hold, how to prioritize maintenance, where to employ condition monitoring, and much more.

RCM and FMECA

Once we divide our analysis into individual components, we will identify the components that pose the greatest risk to the organization (and to the employees and customers). Now it is time to perform more detailed analysis of each individual failure mode, the consequence of each individual failure mode, and the likelihood of each failure mode. This is traditional reliability centered maintenance (or failure modes, effects, and criticality analysis), but at least we have performed that analysis only where it is warranted.

Back to the criticality spectrum

And thus, we have our criticality spectrum, with very basic system criticality analysis at one end, and RCM/FMECA at the other. Taking such an approach will ensure that the critical components are given the attention they require without having to perform detailed analysis on each and every component within the facility.

Conclusion

The sequence above describes the transition from a very basic criticality analysis to a highly detailed one, with the level of detail driven by criticality. When time is available, we can circle back and review the ranking given to the least critical assets, just to make sure we did not miss anything. This is part of the continuous improvement process. We should review the criticality assigned to all assets as improvements are made to reliability, our ability to detect the onset of failure, and the perceived consequences of failure. The key is to determine the criticality with as much detail as possible and then use that information to prioritize and justify everything from the reliability improvement process to the maintenance work that is performed on a daily basis.
