The Asset Criticality Ranking: Key to Any Reliability Improvement Programme

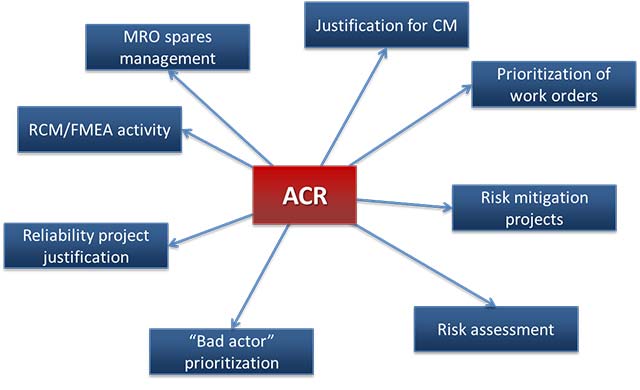

When striving to improve reliability, you face many decisions that require prioritization. Which machines do I include in a condition monitoring programme? Which spares do I need to keep on hand? How do I prioritize work requests? Which projects identified in my “bad actor” list do I work on first? Which assets do I include in my detailed RCM analysis? You can probably think of others.

So how do you make those decisions? And how do you help senior management understand the risks the business currently faces?

The answer? The asset criticality ranking.

What Is the Asset Criticality Ranking?

The asset criticality ranking, as this author defines it, combines three elements:

- Consequences: The consequence of failure (including the impact on production, safety, environment, and quality, and the replacement/repair cost)

- Reliability: The reliability of the asset (the likelihood that it will develop a fault condition leading to functional failure, and therefore to those consequences)

- Detectability: The detectability of the fault condition (how likely it is that we will detect the onset of failure and thus avoid those consequences)

At one extreme is an asset that is unreliable, offers no means of detecting imminent failure, and has dire consequences if it fails. That asset poses a serious risk, demands our attention, and will achieve a high criticality ranking. At the other extreme, if the consequence of failure is very low, the reliability is high, and we will be warned when failure is imminent, the asset poses almost no risk and there is little point in taking any preventive action. We can probably let that asset run to failure.

Therefore, rather than adopting a criticality scheme that simply declares an asset critical, essential, or nonessential (or some other category), it is important to develop a numerical scoring system that captures these gradations and supports the decisions listed above.
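The article does not prescribe a formula for turning the three elements into one number. A minimal sketch, assuming a scheme similar to the risk priority number used in FMEA: consequence and reliability are each scored 1 to 5 (5 being the most severe consequence and the least reliable asset), detectability is recorded as a percentage as described later in this article, and the three are multiplied. The function name, the scales, and the multiplication itself are illustrative assumptions, not the author's method.

```python
def criticality_score(consequence: int, reliability_tier: int,
                      detectability_pct: float) -> float:
    """Combine the three elements into one score (assumed, FMEA-style scheme).

    consequence:       1 (insignificant) to 5 (extreme)
    reliability_tier:  1 (very reliable) to 5 (very unreliable)
    detectability_pct: 0 to 100, chance the onset of failure is detected
    Higher scores mean higher risk.
    """
    if not 1 <= consequence <= 5:
        raise ValueError("consequence must be between 1 and 5")
    if not 1 <= reliability_tier <= 5:
        raise ValueError("reliability_tier must be between 1 and 5")
    if not 0 <= detectability_pct <= 100:
        raise ValueError("detectability_pct must be between 0 and 100")
    # Probability that a developing fault goes unnoticed.
    miss_probability = 1 - detectability_pct / 100
    return consequence * reliability_tier * miss_probability


# The two extremes described above.
print(criticality_score(5, 5, 0))    # unreliable, undetectable, dire: 25.0
print(criticality_score(1, 1, 100))  # reliable, always detected, trivial: 0.0
```

Because the miss probability multiplies everything else, an asset whose failure is certain to be detected scores zero. If that is too aggressive for your site, one option is to put a floor under the miss probability.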

How Do You Determine the Asset Criticality Ranking?

Some maintenance or reliability professionals attempt to set the ranking for each asset on their own. To ensure that the best information is used and that you have complete buy-in, however, you need to involve all the stakeholders: production/operations, safety, quality, environmental, maintenance, and reliability. You may also include someone from the engineering department.

To make this a reliable and repeatable process, you need to agree on certain guidelines. Let’s take a look at the three areas listed above.

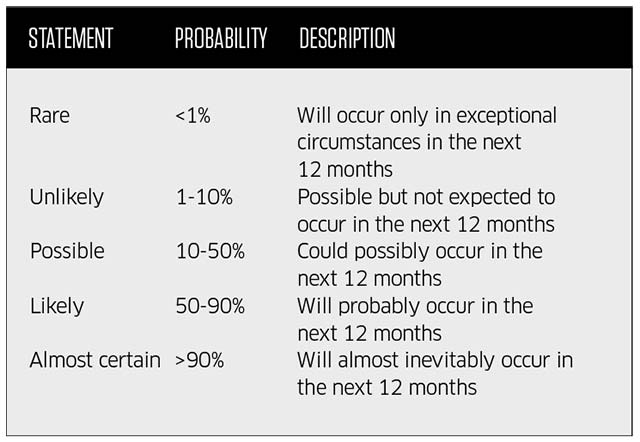

Ranking Reliability

We recommend that you establish a five-tier system and rank each asset accordingly. The table below represents one example, but you may choose to do it differently.
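The five tiers themselves come from your own table. As a sketch, assuming the tiers are defined by how many functional failures you expect per year, the lookup could look like this. The thresholds are hypothetical placeholders, not values from the article; replace them with the ones your team agrees on.

```python
# Hypothetical thresholds: (maximum expected failures per year, tier).
# Tier 1 = very reliable, tier 5 = very unreliable. Replace with your own table.
TIER_THRESHOLDS = [
    (0.1, 1),  # at most one failure in ten years
    (0.5, 2),  # at most one failure in two years
    (1.0, 3),  # at most one failure per year
    (4.0, 4),  # at most one failure per quarter
]


def reliability_tier(failures_per_year: float) -> int:
    """Map an expected failure rate to a reliability tier from 1 to 5."""
    if failures_per_year < 0:
        raise ValueError("failure rate cannot be negative")
    for max_rate, tier in TIER_THRESHOLDS:
        if failures_per_year <= max_rate:
            return tier
    return 5  # more than one failure per quarter


print(reliability_tier(0.3))  # 2
print(reliability_tier(12))   # 5
```

Keeping the thresholds in a single table, rather than scattered through the code, makes it easy to review them with the stakeholders and change them later.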

Ranking Detectability

We could devise a similar tiered process, but we recommend keeping it simple: record the likelihood (as a percentage) that the onset of failure will be detected. A score of 100 percent means you are absolutely sure you will detect the fault condition; zero percent means you will have no warning at all, not even from local operators.
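An asset often has more than one chance of being caught: a condition monitoring route, an online sensor, or simply the operators on their rounds. If you want to roll several sources into the single percentage described above, one hedged approach is to assume the sources work independently and compute the chance that at least one of them detects the fault. The independence assumption and the source names are illustrative; in practice, sources can miss the same fault for the same reason, so treat the result as optimistic.

```python
from math import prod


def combined_detectability(sources_pct: dict[str, float]) -> float:
    """Chance (0-100) that at least one source detects the onset of failure.

    Assumes each detection source works independently of the others.
    An empty dict means no warning at all, so the result is 0.
    """
    for name, pct in sources_pct.items():
        if not 0 <= pct <= 100:
            raise ValueError(f"{name}: detectability must be 0-100, got {pct}")
    # Probability that every source misses the fault.
    all_miss = prod(1 - pct / 100 for pct in sources_pct.values())
    return 100 * (1 - all_miss)


# Hypothetical detection sources for one pump.
print(round(combined_detectability({"operator rounds": 30, "vibration route": 80}), 1))  # 86.0
```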

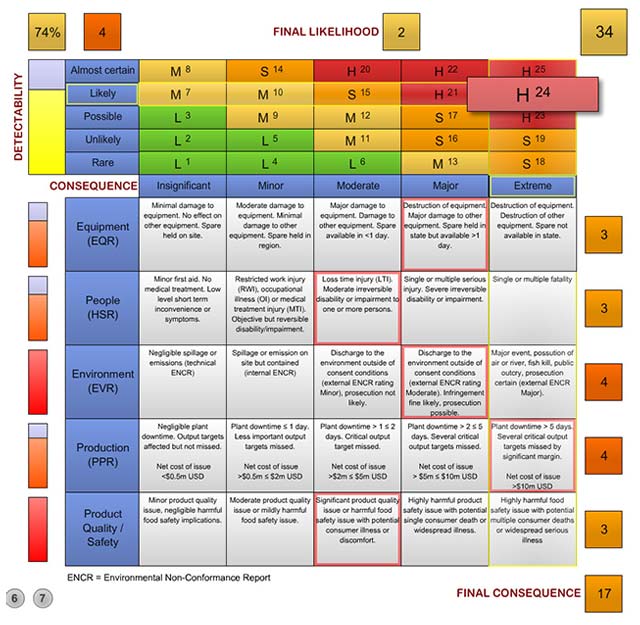

Ranking the Consequence of Failure

This is where it gets a bit tricky. We could simply define a score between 1 and 5 that ranks the consequence, for example insignificant, minor, moderate, major, and extreme. But those labels alone are too vague to apply consistently; you need a better definition.

Instead, the author recommends defining the consequence of failure from several viewpoints: maintenance, plant safety, impact on production, impact on the environment, and quality (which could include impact on customers). You may have other areas of concern, and you could include other consequences such as damage to your brand.

You would then define five levels of severity in each of those areas. For example, for maintenance the five levels could be:

- Insignificant: Minimal damage to equipment. No effect on other equipment. Spare held on site.

- Minor: Moderate damage to equipment. Minimal damage to other equipment. Spare held in region.

- Moderate: Major damage to equipment. Damage to other equipment. Spare available in less than one day.

- Major: Destruction of equipment. Major damage to other equipment. Spare held in state but available in more than one day.

- Extreme: Destruction of equipment. Destruction of other equipment. Spare not held in state.
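Whatever definitions you settle on, it helps to store the level as a number while keeping the agreed wording alongside it, so that anyone reviewing a score can see what it means. A minimal sketch using the maintenance levels above; the `Severity` and `MAINTENANCE_CRITERIA` names are illustrative, and each of the other categories would get its own dictionary of definitions.

```python
from enum import IntEnum


class Severity(IntEnum):
    """Five severity levels shared by every consequence category."""
    INSIGNIFICANT = 1
    MINOR = 2
    MODERATE = 3
    MAJOR = 4
    EXTREME = 5


# Maintenance definitions from the example list; replace with your own.
MAINTENANCE_CRITERIA = {
    Severity.INSIGNIFICANT: "Minimal damage to equipment. No effect on other equipment. Spare held on site.",
    Severity.MINOR: "Moderate damage to equipment. Minimal damage to other equipment. Spare held in region.",
    Severity.MODERATE: "Major damage to equipment. Damage to other equipment. Spare available in less than one day.",
    Severity.MAJOR: "Destruction of equipment. Major damage to other equipment. Spare held in state but available in more than one day.",
    Severity.EXTREME: "Destruction of equipment. Destruction of other equipment. Spare not held in state.",
}


def describe(level: int) -> str:
    """Return the agreed wording for a maintenance score of 1 to 5."""
    severity = Severity(level)  # raises ValueError for anything outside 1-5
    return f"{severity.name.title()} ({severity.value}): {MAINTENANCE_CRITERIA[severity]}"


print(describe(3))
# Moderate (3): Major damage to equipment. Damage to other equipment. Spare available in less than one day.
```

Because `IntEnum` members compare as integers, the levels can be sorted and used in arithmetic while still printing a readable name.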

Of course, you need to come up with your own ranking according to your particular situation.

You would do the same in each of the other areas. If this is done in full cooperation with the stakeholders, there should be complete buy-in to the process and the final ranking.

The idea is that each stakeholder defines the consequence of failure for each asset within their own area. You don’t want a maintenance person trying to assess the consequence of failure in terms of production loss, quality, or safety; you have experts in those subject areas, and they should be the ones to define the consequence.

Are All Consequences Equal?

There is one more element to consider. You are likely to find that the most severe consequences in the various categories are not equal. For example, the most extreme consequence from a safety point of view could be a fatality, whereas from a maintenance point of view it is the destruction of equipment. Clearly a fatality is far worse. Therefore, we recommend scaling or weighting each consequence category so that the scores assigned by the different category experts are normalized to a common scale.
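One way to apply such weights, sketched under stated assumptions: each category's 1 to 5 score is multiplied by a weight expressing how that category's most extreme level compares with the most extreme safety consequence, and the asset takes the worst weighted value. The weights below are hypothetical and exist only to show the mechanics; agree on real values with your stakeholders.

```python
# Hypothetical weights: how each category's worst case compares with the
# worst safety case (a fatality). Illustrative only; agree real values locally.
CATEGORY_WEIGHTS = {
    "safety": 1.0,
    "environment": 0.8,
    "production": 0.6,
    "quality": 0.5,
    "maintenance": 0.4,
}


def weighted_consequence(scores: dict[str, int]) -> float:
    """Worst weighted consequence; at most 5 because no weight exceeds 1.0.

    scores maps each category to the 1-5 severity its expert assigned.
    """
    if not scores:
        raise ValueError("at least one category score is required")
    weighted = []
    for category, score in scores.items():
        if category not in CATEGORY_WEIGHTS:
            raise KeyError(f"no weight defined for category {category!r}")
        if not 1 <= score <= 5:
            raise ValueError(f"{category}: score must be 1-5, got {score}")
        weighted.append(CATEGORY_WEIGHTS[category] * score)
    return max(weighted)


# Extreme maintenance damage (5 x 0.4 = 2.0) counts for less than
# an extreme production loss (5 x 0.6 = 3.0).
print(weighted_consequence({"safety": 2, "production": 5, "maintenance": 5}))  # 3.0
```

Taking the maximum means a single severe category drives the result. Summing the weighted scores instead would favour assets that are moderately bad in many categories, so make that choice deliberately.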

What Do You Do with the Asset Criticality Ranking?

Broadly, you can do two things with the ranking you have defined.

First, as mentioned in the introduction, you can use the ranking to help you make important decisions. Everything you do should add value to the business. If you have defined the asset criticality ranking process correctly, the assets with the highest scores pose the greatest risk to your business, so that is where you should focus your attention.
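Once every asset has a score, focusing attention is largely a matter of sorting. A minimal sketch; the `Asset` type, the asset tags, and the scores are hypothetical, and the score can come from whatever combination scheme you have agreed on.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    tag: str
    score: float  # criticality score from your agreed scheme; higher = more risk


def top_priorities(assets: list[Asset], n: int) -> list[str]:
    """Return the tags of the n highest-scoring assets, highest first."""
    ranked = sorted(assets, key=lambda asset: asset.score, reverse=True)
    return [asset.tag for asset in ranked[:n]]


# Hypothetical plant register.
register = [
    Asset("P-101", 18.0),
    Asset("F-204", 7.5),
    Asset("C-301", 24.0),
    Asset("M-410", 2.0),
]
print(top_priorities(register, 2))  # ['C-301', 'P-101']
```

The same ordering can feed the decisions listed in the introduction, such as which machines join the condition monitoring programme or which work requests come first.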

Second, if you record the individual values for reliability, detectability, and consequence as described above, you have a wealth of information for refining those decisions. The asset criticality ranking will reveal which assets rank highly because of poor reliability; they are good targets for reliability improvement projects. It will reveal where assets rank highly because of poor detectability; that is a good place to implement condition monitoring. And it will reveal where criticality is high because of the severity of the consequences of failure; those cases may call for changes that mitigate the risk, for example adding redundancy so that a single failure does not affect production.
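Because the three elements are recorded separately, you can ask which one is driving a given asset's ranking and map it to the responses described above. A sketch, assuming consequence and reliability are scored 1 to 5 and detectability is recorded as a percentage, with each element rescaled to 0 to 1 where 1 is the worst case; that rescaling, and the `dominant_driver` name, are illustrative choices rather than part of the article.

```python
# Responses suggested in the text for each kind of high criticality.
ACTIONS = {
    "reliability": "reliability improvement project",
    "detectability": "condition monitoring",
    "consequence": "risk mitigation, for example adding redundancy",
}


def dominant_driver(consequence: int, reliability_tier: int,
                    detectability_pct: float) -> tuple[str, str]:
    """Return the element contributing most to criticality and a suggested response.

    Each element is rescaled to 0-1, where 1 is the worst case. Ties go to
    consequence, then reliability, then detectability (dict order).
    """
    contributions = {
        "consequence": (consequence - 1) / 4,
        "reliability": (reliability_tier - 1) / 4,
        "detectability": 1 - detectability_pct / 100,
    }
    driver = max(contributions, key=contributions.get)
    return driver, ACTIONS[driver]


print(dominant_driver(2, 5, 60))  # ('reliability', 'reliability improvement project')
print(dominant_driver(2, 2, 10))  # ('detectability', 'condition monitoring')
```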

A Living System

It is important to understand that this is not a task that is performed once and forgotten. The ranking should be updated whenever condition monitoring or other detection systems are implemented, when the reliability of assets is improved, when additional measures mitigate some of the risks (for example, improvements in spares management), and when new assets are installed and old assets are retired.

Conclusion

There is much more that could be said about this topic, but that is what training is for. Hopefully this article has clarified the importance of the asset criticality ranking and provided a practical approach to developing it.
